PineDrama and Tadka: what TikTok and JioHotstar's microdrama bets mean for your video stack

In early 2026, Omdia and The Streaming Wars reported that the leading vertical drama app in the US was clocking 35.7 minutes per day per active user. Netflix on the same panel sat at 24.8. Prime Video at 26.9. Disney+ at 23. Read that twice. A category most video infrastructure roadmaps still treat as a curiosity is now pulling more daily attention than the streaming services those same roadmaps were built around.

If you are building a video product in 2026, that number should make you nervous for a very specific reason. It is not about content strategy. It is about what kind of video workload your stack is actually designed for, and whether it can survive the shift already happening in mobile-first viewing.

PineDrama and Tadka are the two clearest examples of that shift in the wild, and both tell you something uncomfortable about the assumptions baked into most video infrastructure today.

TikTok shipped PineDrama as a standalone app. JioStar built Tadka as a dedicated section inside JioHotstar. Neither team bolted microdrama onto an existing surface, and that decision is the real signal. Microdrama's workload (sub-90-second episodes, feed-based swipes, per-episode entitlements, sub-second startup every time) breaks the assumptions underneath long-session VOD stacks. If you are building a video product in 2026, you need shorter GOPs and smaller segments in encoding, prefetch as a first-class primitive in your player, prefetch-aware CDN caching, episode-level QoE analytics, and an entitlement service callable from inside the player in under 100 milliseconds. This article walks through exactly what to change, what breaks if you do not, and where PineDrama and Tadka already prove it.

Two signals matter here, and both are about decisions, not metrics.

TikTok already has the largest vertical video surface on the planet. If microdrama were just "TikTok with longer stories," the obvious move would be a new feed tab inside the main app. TikTok did not do that. In January 2026, it shipped PineDrama as a standalone app in the US and Brazil, and by April 2026 the Google Play listing showed more than five million installs, a 4.43 average rating across 322 reviews, and a release cadence measured in weeks.

The reason is workload shape. TikTok's stack is built around UGC clips, session-style feeds, and ad-supported monetization. Microdrama needs per-episode entitlements, coin-based unlocks inside the player, series-level retention tracking, and a production pipeline that looks nothing like user uploads. Bolting those onto the main TikTok surface would have slowed both products. A separate app let TikTok build around the microdrama workload directly instead of retrofitting.

If the company with the most advanced short-form video stack on earth concluded it needed a separate app for this workload, that is a strong signal for every other team still planning to "add a microdrama section" to an existing product.

JioHotstar is built around long-session VOD: subscription entitlement at login, library browsing, recommendations, session-based QoE dashboards. It serves hundreds of millions of monthly viewers. On paper, adding microdrama could have been a new row in the catalog. Instead, JioStar created Tadka as a dedicated microdrama experience inside the app. Tadka has 100+ short shows across romance, action, thrillers and sports, and reporting suggests roughly 1,000 micro shows are planned.

The same lesson applies in reverse. A long-session VOD stack cannot fake a feed loop. Per-episode paywalls, sub-second startup, prefetch on swipe, and episode-level retention measurement all sit outside what JioHotstar's main video surface was optimized for. Tadka exists so the microdrama workload gets its own stack and its own metrics, without dragging the main product around.

Two companies, opposite starting points, same conclusion: this workload does not fit the existing shape.

HLS deployments settled on six-second segments, the target duration Apple's authoring guidance recommends. ABR ladders assume the player has time to negotiate up and down across renditions. Encoder presets and CDN caches were tuned around the idea that a viewer commits to a show for at least a few minutes, so a couple of seconds of startup averages out across the session.

A microdrama episode is sometimes shorter than that buffering budget. The viewer arrives, the first frame has to land in well under a second, the episode plays out, and the swipe to the next one is treated as failure if there is any visible delay. There is no session to amortize startup across. Every episode is a cold start in everything but name.

For a builder, this means rebuffer ratio measured per session will look fine while the actual experience is dying at the per-episode level. Your dashboards report green. Your Play Store reviews report red. PineDrama's top review complaints do not mention encoding, but they do flag friction at the episode boundary (forced login, missing search), which is what happens when the product surface still carries VOD-era assumptions.
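To make the gap concrete, here is a minimal sketch of the same playback data scored two ways. The `EpisodePlay` record, the 5% threshold and the sample numbers are illustrative assumptions, not measurements from PineDrama or any real app, and rebuffer ratio is taken here as stall time divided by stall plus watch time.

```python
from dataclasses import dataclass


@dataclass
class EpisodePlay:
    """One episode impression inside a feed session (hypothetical schema)."""
    episode_id: str
    watch_s: float  # seconds of video actually played
    stall_s: float  # seconds spent waiting (startup wait counted as stall here)


def rebuffer_ratio(stall_s: float, watch_s: float) -> float:
    """Stall time as a fraction of total time spent on the episode."""
    total = stall_s + watch_s
    return stall_s / total if total > 0 else 0.0


def session_ratio(plays: list) -> float:
    """The VOD-style view: one ratio averaged over the whole session."""
    return rebuffer_ratio(sum(p.stall_s for p in plays),
                          sum(p.watch_s for p in plays))


def episodes_over(plays: list, threshold: float) -> list:
    """The per-episode view: which impressions individually blew the budget."""
    return [p.episode_id for p in plays
            if rebuffer_ratio(p.stall_s, p.watch_s) > threshold]


# Made-up session: three clean episodes and one the viewer abandoned
# after a two-second stall.
plays = [
    EpisodePlay("ep1", watch_s=60.0, stall_s=0.2),
    EpisodePlay("ep2", watch_s=58.0, stall_s=0.1),
    EpisodePlay("ep3", watch_s=4.0, stall_s=2.0),
    EpisodePlay("ep4", watch_s=61.0, stall_s=0.3),
]

print(f"session ratio: {session_ratio(plays):.3f}")
print(f"episodes over 5%: {episodes_over(plays, 0.05)}")
```

In this made-up session the session-level ratio stays under 2%, while one episode spent a third of its time stalled. Only the per-episode view surfaces it.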

Most analytics products in video were built around the session: time spent per session, bitrate over the session, rebuffer ratio per session, exits before video start per session. Microdrama deletes the session. There is no moment where the viewer picks a title and settles in. There is a feed, and the feed never ends.

Meta and Ormax measured this directly in March 2026 and found that Indian microdrama viewers average around 3.5 hours per week across seven to eight short sessions per day. The session is no longer a meaningful unit because the viewer is not thinking in sessions. They are thinking in swipes.

If you are building, the right unit is the episode impression and the swipe transition. Did the first frame land before the viewer's thumb decided? Did the next episode prefetch in time? Did the viewer drop after one episode of a series, or after seventeen? Series-level retention now matters more than session-length, and none of that fits cleanly into the default dashboards of most video data products today.

Every row of the table below is a roadmap item for a team that has not started yet.

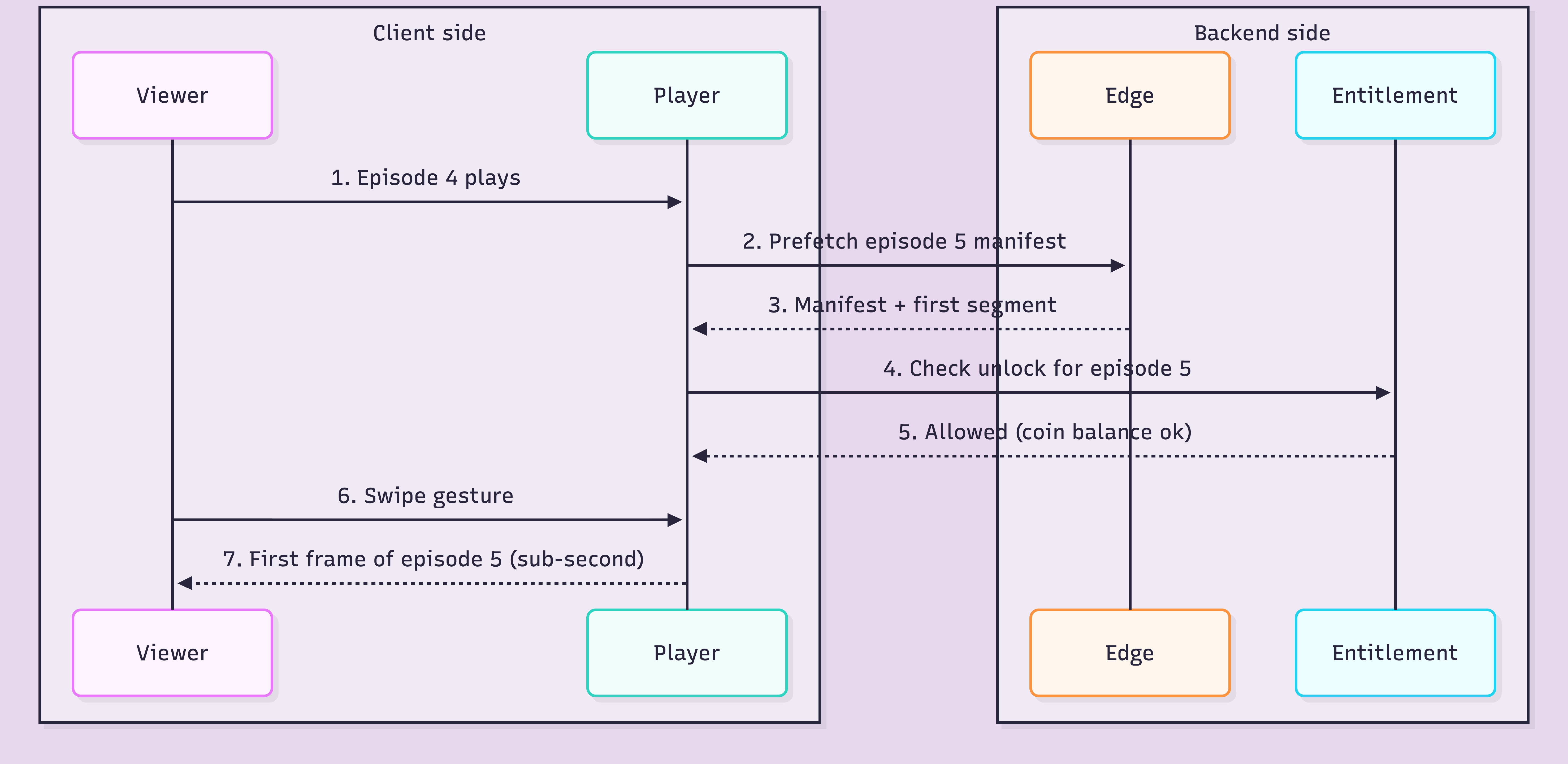

For the last decade, the headline innovation in video delivery was adaptive bitrate. In a feed-based microdrama app, ABR is necessary but no longer sufficient. The harder problem is now prefetch. The player has to fetch the manifest and the first segment of the next episode while the current one is playing, because the swipe gesture is treated as a hard deadline by the viewer.

Prefetch is not a feature you add to a player. It is a primitive the entire pipeline has to expose. The encoder has to align keyframes so the prefetched segment is decodable from the start. The CDN has to honor a different cache priority for prefetch requests. The entitlement service has to be callable mid-feed without blocking the UI thread. Player SDKs that ship without a real prefetch API are simply not microdrama-ready, and that is most player SDKs in 2026.

A microdrama series has 80 chapters. The first 8 are free. After that, every chapter costs coins, and coins are bought in bundles. The unlock decision happens between episode 8 and episode 9, mid-feed, without breaking the swipe loop.

AppsFlyer's State of Subscriptions for Marketers 2026 measured the impact: ad-supported revenue in the category grew from near zero in 2023 to 7.4% in March 2026, while paid installs grew 155% year over year. The category is paid in micro-transactions, and the transaction surface is the player itself.

For a builder, that is a serious change. The unlock check has to be sub-100ms or it stalls the swipe. The coin balance has to be cached on the device but reconciled with the server fast enough that a viewer cannot watch ahead with stale data. DRM license servers have to issue licenses scoped to a single episode, not a whole library. This is why "add a coin economy" is not a weekend project on a VOD-shaped subscription stack.
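One way to picture the device-side cache with server reconciliation is the sketch below. `CoinServer` is an in-memory stand-in for an authoritative backend, and every class and method name here is hypothetical. The point is only the split: the affordability check is local and instant, while the server decides every actual spend and its answer overwrites the cached balance.

```python
from dataclasses import dataclass, field


class InsufficientCoins(Exception):
    """Raised by the server when the real balance cannot cover the price."""


@dataclass
class CoinServer:
    """In-memory stand-in for the authoritative backend (hypothetical)."""
    balance: int
    unlocked: set = field(default_factory=set)

    def unlock(self, episode_id: str, price: int) -> int:
        if episode_id in self.unlocked:  # idempotent: never charge twice
            return self.balance
        if self.balance < price:
            raise InsufficientCoins(episode_id)
        self.balance -= price
        self.unlocked.add(episode_id)
        return self.balance


@dataclass
class DeviceWallet:
    """Device-side cache; the server stays the source of truth."""
    server: CoinServer
    cached_balance: int
    unlocked: set = field(default_factory=set)

    def can_afford(self, price: int) -> bool:
        # Local check only, so the unlock UI can render without a round trip.
        return self.cached_balance >= price

    def unlock(self, episode_id: str, price: int) -> bool:
        if episode_id in self.unlocked:
            return True
        if not self.can_afford(price):
            return False
        try:
            # Reconcile on every unlock: the server's answer replaces the cache.
            self.cached_balance = self.server.unlock(episode_id, price)
        except InsufficientCoins:
            # The cache was stale; resync and refuse instead of playing ahead.
            self.cached_balance = self.server.balance
            return False
        self.unlocked.add(episode_id)
        return True
```

A stale cache that overstates the balance is corrected on the first failed unlock. A cache that understates it, for example after a purchase on another device, would need a separate refresh path that this sketch leaves out.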

If you are building a microdrama product (or anything that looks like one), here is exactly what has to change, layer by layer.

Shorter GOPs so the player can start decoding from any prefetched segment without waiting for the next keyframe. Smaller segment durations (2 to 4 seconds) so the startup budget is not blown by one segment boundary. Vertical 9:16 presets as first-class citizens, not a rotated afterthought. Per-title (content-aware) bitrate ladders tuned for sub-90-second episodes, not 45-minute shows.
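As a rough illustration of the GOP and segment settings, the sketch below builds an ffmpeg command line for a single vertical rendition. It assumes libx264, a source that is already 9:16, and placeholder values for resolution and bitrate. The helper names are hypothetical, and a real ladder would produce several renditions tuned per title.

```python
def gop_frames(fps: int, segment_s: int, gops_per_segment: int = 1) -> int:
    """Keyframe interval in frames so every segment starts on a keyframe."""
    frames = fps * segment_s
    if frames % gops_per_segment:
        raise ValueError("segment length must be a whole number of GOPs")
    return frames // gops_per_segment


def ffmpeg_args(src: str, out_playlist: str, fps: int = 30, segment_s: int = 2,
                width: int = 720, height: int = 1280,
                video_kbps: int = 1800) -> list:
    """Build an ffmpeg command for one 9:16 HLS rendition (illustrative values)."""
    gop = gop_frames(fps, segment_s)
    return [
        "ffmpeg", "-i", src,
        "-vf", f"scale={width}:{height}",  # assumes the source is already 9:16
        "-r", str(fps),
        "-c:v", "libx264", "-b:v", f"{video_kbps}k",
        # Fixed GOP: a keyframe every `gop` frames and no extra scene-cut
        # keyframes, so segment boundaries and keyframes line up.
        "-g", str(gop), "-keyint_min", str(gop), "-sc_threshold", "0",
        "-c:a", "aac",
        "-f", "hls", "-hls_time", str(segment_s), "-hls_playlist_type", "vod",
        out_playlist,
    ]


print(" ".join(ffmpeg_args("episode_009.mp4", "episode_009.m3u8")))
```

With 30 fps and two-second segments the keyframe interval comes out at 60 frames, so any segment the player prefetches is decodable from its first frame.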

Prefetch as a real API primitive, not a hack. The player has to expose "prefetch the next N episodes" to the feed layer and handle cache priority internally. Sub-second time-to-first-frame budgeted per episode, not per session. Swipe gesture handling as part of the playback loop, not the UI layer. Native vertical 9:16 rendering without letterboxing.
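The "prefetch the next N episodes" primitive might look something like the sketch below. It is deliberately synchronous and in-memory. The `FeedPrefetcher` name, the `fetch` callback (standing in for "download the manifest and first segment") and the eviction rule are all assumptions for illustration, and a real player would fetch asynchronously and cancel work on fast swipes.

```python
from collections import OrderedDict
from typing import Callable, Sequence


class FeedPrefetcher:
    """Keeps the first bytes of the next few episodes ready before the swipe."""

    def __init__(self, fetch: Callable[[str], bytes],
                 depth: int = 2, capacity: int = 4):
        self._fetch = fetch          # hypothetical: manifest + first segment
        self._depth = depth          # how many episodes ahead to warm
        self._capacity = capacity    # max prefetched entries kept in memory
        self._cache = OrderedDict()  # episode_id -> prefetched bytes
        self.hits = 0
        self.misses = 0

    def on_playing(self, feed: Sequence[str], index: int) -> None:
        """Called by the feed layer once the episode at `index` starts playing."""
        for episode_id in feed[index + 1: index + 1 + self._depth]:
            if episode_id not in self._cache:
                self._cache[episode_id] = self._fetch(episode_id)
            while len(self._cache) > self._capacity:
                self._cache.popitem(last=False)  # evict the oldest entry

    def on_swipe(self, episode_id: str) -> bytes:
        """Called on swipe; a hit means playback can start without a fetch."""
        if episode_id in self._cache:
            self.hits += 1
            return self._cache.pop(episode_id)
        self.misses += 1
        return self._fetch(episode_id)  # cold start: the visible stutter
```

The `hits` and `misses` counters are there on purpose. They are the raw material for treating prefetch hit rate as a QoE metric rather than a debug log.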

CDN strategy tuned for prefetch-aware caching, where prefetched segments get different TTLs and eviction rules than on-demand requests. Edge nodes that can serve the first segment of the next episode on a different cache key from the currently-playing one. Signed URLs that are cheap enough to issue per episode, not per session.
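Per-episode signed URLs are cheap when signing is a local HMAC computation rather than a database lookup. The sketch below shows one such scheme. The URL layout, the `exp` and `sig` parameter names and the message format are illustrative assumptions, not any particular CDN's token format.

```python
import hashlib
import hmac
from urllib.parse import parse_qs, urlencode, urlparse


def _signature(path: str, expires_at: int, secret: bytes) -> str:
    """HMAC-SHA256 over the episode path and expiry time."""
    message = f"{path}|{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_episode_url(base_url: str, episode_id: str,
                     expires_at: int, secret: bytes) -> str:
    """Issue a URL that is valid for one episode until `expires_at`."""
    path = f"/v/{episode_id}/master.m3u8"  # hypothetical path layout
    query = urlencode({"exp": expires_at,
                       "sig": _signature(path, expires_at, secret)})
    return f"{base_url}{path}?{query}"


def verify_episode_url(url: str, now: int, secret: bytes) -> bool:
    """Edge-side check: signature matches this exact path and is not expired."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires_at = int(params["exp"][0])
        signature = params["sig"][0]
    except (KeyError, IndexError, ValueError):
        return False
    expected = _signature(parsed.path, expires_at, secret)
    return now < expires_at and hmac.compare_digest(signature, expected)
```

Because the episode path is part of the signed message, a URL issued for episode 9 does not verify for episode 10, which is the per-episode scoping the feed needs.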

Episode impression as the primary event, not session start. Swipe transition as a first-class metric with a latency distribution attached. Series-level retention curves instead of session-length histograms. Prefetch hit and miss rates as QoE metrics, not debug logs. Rebuffer ratio bucketed per episode, not averaged over the feed.
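A minimal sketch of two of these metrics follows: a series-level retention curve built from episode impressions, and a nearest-rank percentile for swipe latency. The event shapes and sample numbers are assumptions for illustration. "Reached episode k" is taken here to mean the viewer's furthest episode is at least k, which is only one of several reasonable definitions.

```python
import math


def series_retention(impressions) -> dict:
    """Fraction of a series' viewers who reached each episode number.

    `impressions` is an iterable of (viewer_id, episode_number) pairs.
    """
    furthest = {}
    for viewer_id, episode_number in impressions:
        furthest[viewer_id] = max(furthest.get(viewer_id, 0), episode_number)
    if not furthest:
        return {}
    viewers = len(furthest)
    last_episode = max(furthest.values())
    return {
        ep: sum(1 for reached in furthest.values() if reached >= ep) / viewers
        for ep in range(1, last_episode + 1)
    }


def percentile(values, pct: float) -> float:
    """Nearest-rank percentile; keeps the tail visible instead of averaging it."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("no samples")
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


# Made-up data: three viewers of one series, five swipe latencies in ms.
impressions = [("a", 1), ("a", 2), ("a", 3), ("b", 1), ("c", 1), ("c", 2)]
swipe_latency_ms = [120, 80, 95, 900, 110]

print(series_retention(impressions))
print(percentile(swipe_latency_ms, 50), percentile(swipe_latency_ms, 95))
```

In the sample data the median swipe looks healthy while the 95th percentile does not, which is why the latency distribution matters more than its mean.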

Entitlement service callable from inside the player in under 100 milliseconds. Per-episode license issuance on the DRM server, not per-library. Coin balance cached on device with server reconciliation on every unlock. Unlock UI rendered inside the player, not as a modal above it. Webhooks for unlock events so the catalog and CRM stay in sync.
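The 100-millisecond budget can be enforced in the player rather than hoped for. The sketch below wraps an entitlement lookup in a hard timeout using `asyncio.wait_for`. The `lookup` coroutine and the three result strings are hypothetical, and what the player does on a timeout is a product decision this sketch leaves to the caller.

```python
import asyncio


async def check_entitlement(lookup, viewer_id: str, episode_id: str,
                            budget_s: float = 0.1) -> str:
    """Return "allowed", "locked" or "timeout" within the latency budget.

    `lookup` is a hypothetical coroutine function that asks the entitlement
    service whether this viewer may play this episode.
    """
    try:
        allowed = await asyncio.wait_for(lookup(viewer_id, episode_id),
                                         timeout=budget_s)
    except asyncio.TimeoutError:
        # Budget blown: report it instead of stalling the swipe.
        return "timeout"
    return "allowed" if allowed else "locked"


async def fast_lookup(viewer_id: str, episode_id: str) -> bool:
    return True  # stand-in for a cache hit


async def slow_lookup(viewer_id: str, episode_id: str) -> bool:
    await asyncio.sleep(0.4)  # stand-in for a 400 ms backend call
    return True


print(asyncio.run(check_entitlement(fast_lookup, "u1", "s1e9")))
print(asyncio.run(check_entitlement(slow_lookup, "u1", "s1e9")))
```

Counting the "timeout" results per episode also gives the analytics layer a direct signal for how often entitlement sits on the critical path of a swipe.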

The tempting move is to reuse the video stack you already have and just ship a new UI on top. Here is what actually happens when you try.

Session-based analytics lie to you. Your dashboards show a healthy average session of 14 minutes with low rebuffer ratio. Your Play Store reviews call the app unwatchable. Both are true, because sessions average over dozens of swipes and hide the per-episode cold starts that are killing you.

Startup latency kills retention silently. A VOD-tuned player takes 1.5 to 2.5 seconds to show the first frame. On a 60-second episode, that wait equals roughly 2.5% to 4% of the runtime before anything happens. Across a fifteen-episode feed, it adds up to about 22 to 38 seconds of waiting if every episode pays the cold start. The cumulative friction quietly eats retention, and you will not see it in session metrics.

No prefetch support means stuttered swipes. Without prefetch as a first-class primitive, the player fetches the next episode on swipe, not during playback. Every swipe becomes a visible stutter. Viewers abandon within the opening few episodes and never come back.

Paywall outside the player breaks the feed. When the unlock modal lives in the app shell instead of inside the player, every episode-8-to-episode-9 transition becomes a full-screen context switch. The feed loop dies, the purchase rate drops, and the per-episode economics collapse.

Entitlement checks too slow to be mid-feed. A 400-millisecond entitlement call is invisible at login and fatal mid-swipe. Your DRM and entitlement stack was probably not built to be on the hot path of every episode boundary.

Individually, each of these is a tuning problem. Stacked together, they are the reason TikTok built PineDrama separately and JioStar built Tadka as its own surface.

Use this as a checklist. If any row is missing, your stack is already behind the workload.

The last row matters more than it looks. The hard part of microdrama infrastructure is not any single capability. It is making sure every layer agrees on what the unit of consumption actually is. Stacks stitched together from seven vendors disagree internally, and the disagreement shows up as latency on the swipe.

We built FastPix around a simple premise: the unit of work in video is getting shorter, the unit of consumption is getting shorter, and the stack has to agree on that from the encoder up. For microdrama teams, that translates into vertical 9:16 encoding with per-title ladders, Web/iOS/Android player SDKs built with prefetch in mind, Video Data for episode-level QoE analytics, signed playback with DRM-ready outputs for per-episode entitlement flows, and webhooks on every state change so your catalog and monetization stay in sync without polling. One API surface, usage-based pricing, and the same assumptions about swipes and episodes running through every layer.

If you are planning a PineDrama or Tadka shaped product, FastPix is designed around the workload you are actually building for.

TikTok's existing stack was optimized for UGC clips and session-style feeds. Microdrama needs per-episode entitlements, coin-based unlocks inside the player, and series-level retention tracking. Shipping PineDrama as a standalone app in January 2026 let TikTok build around the microdrama workload directly instead of retrofitting it onto the main TikTok surface.

JioHotstar is built around long-session VOD, with subscription entitlement at login and session-based QoE. Microdrama's feed loop, sub-second startup and per-episode unlock do not fit that shape, so JioStar built Tadka as a dedicated experience with its own stack and metrics.

Session-based analytics hide per-episode cold starts. Startup latency silently kills retention. Players without prefetch support stutter on every swipe. Paywalls outside the player break the feed loop. Entitlement checks that are invisible at login become fatal mid-swipe.

Microdramas are already mainstream. Omdia projects global microdrama revenue to grow from $11 billion in 2025 to $14 billion by the end of 2026. Sensor Tower measured 115% year-over-year revenue growth in the category, and AppsFlyer recorded a 155% year-over-year jump in paid installs.

Microdrama infrastructure is the video stack underneath sub-90-second vertical drama apps: vertical 9:16 encoding with shorter GOPs and smaller segments, prefetch-aware player SDKs, episode-level QoE analytics, sub-100ms per-episode entitlement checks, and prefetch-aware CDN caching.